Connect Blob Storage

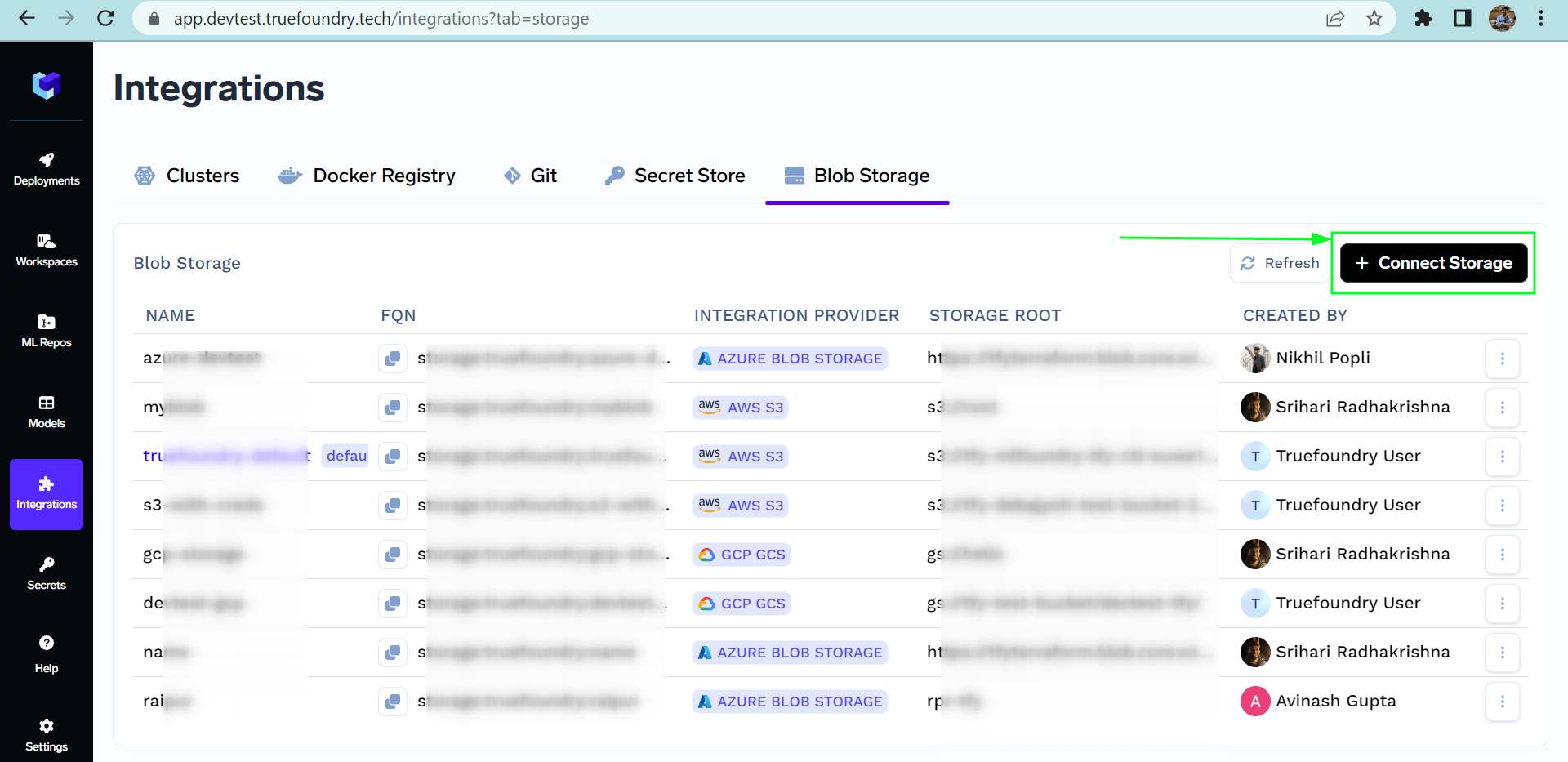

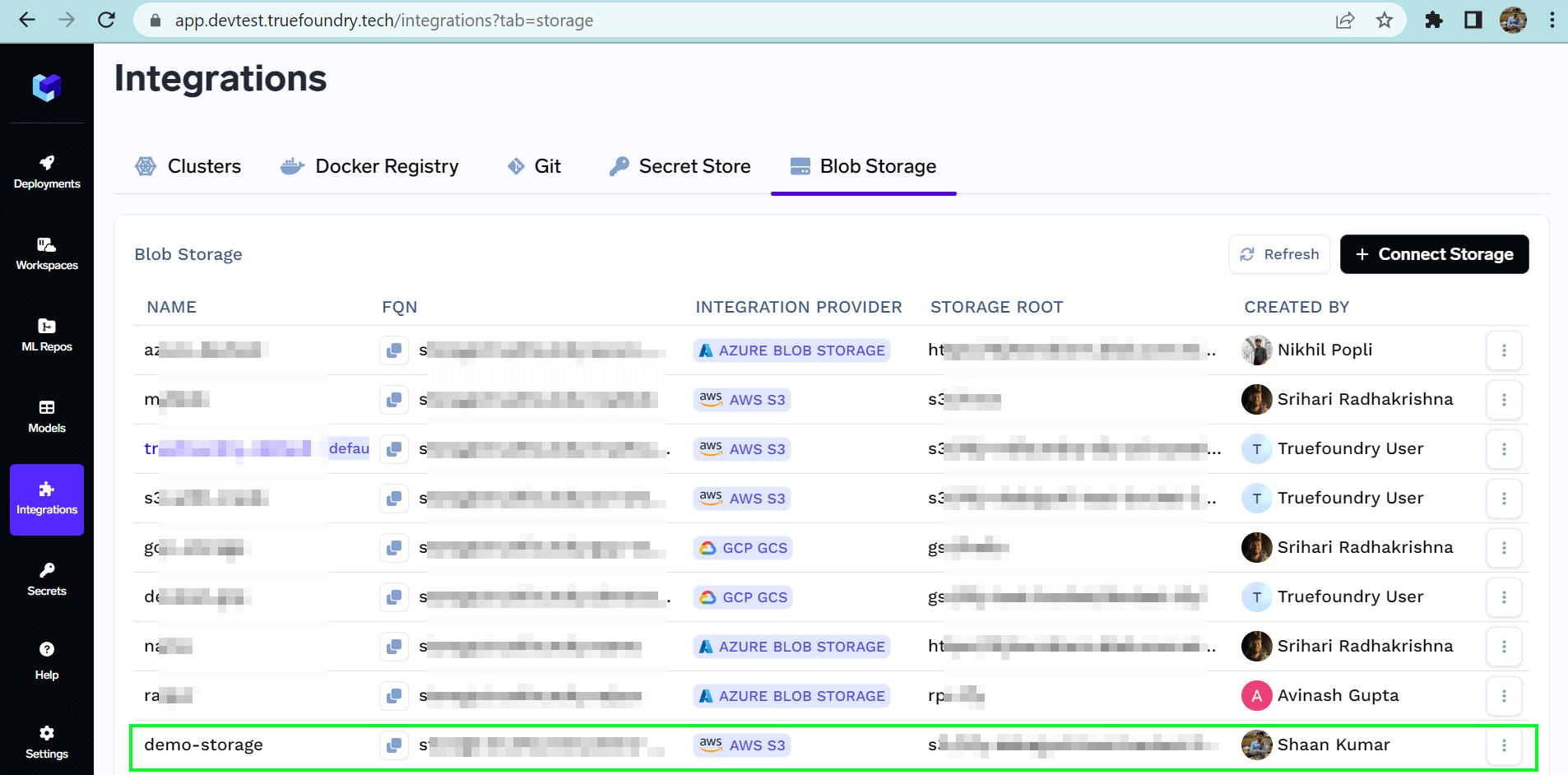

To connect a new storage, one needs to follow the following steps:- Navigate to the

Integrationspage and go to theBlob Storagetab. - Click on the

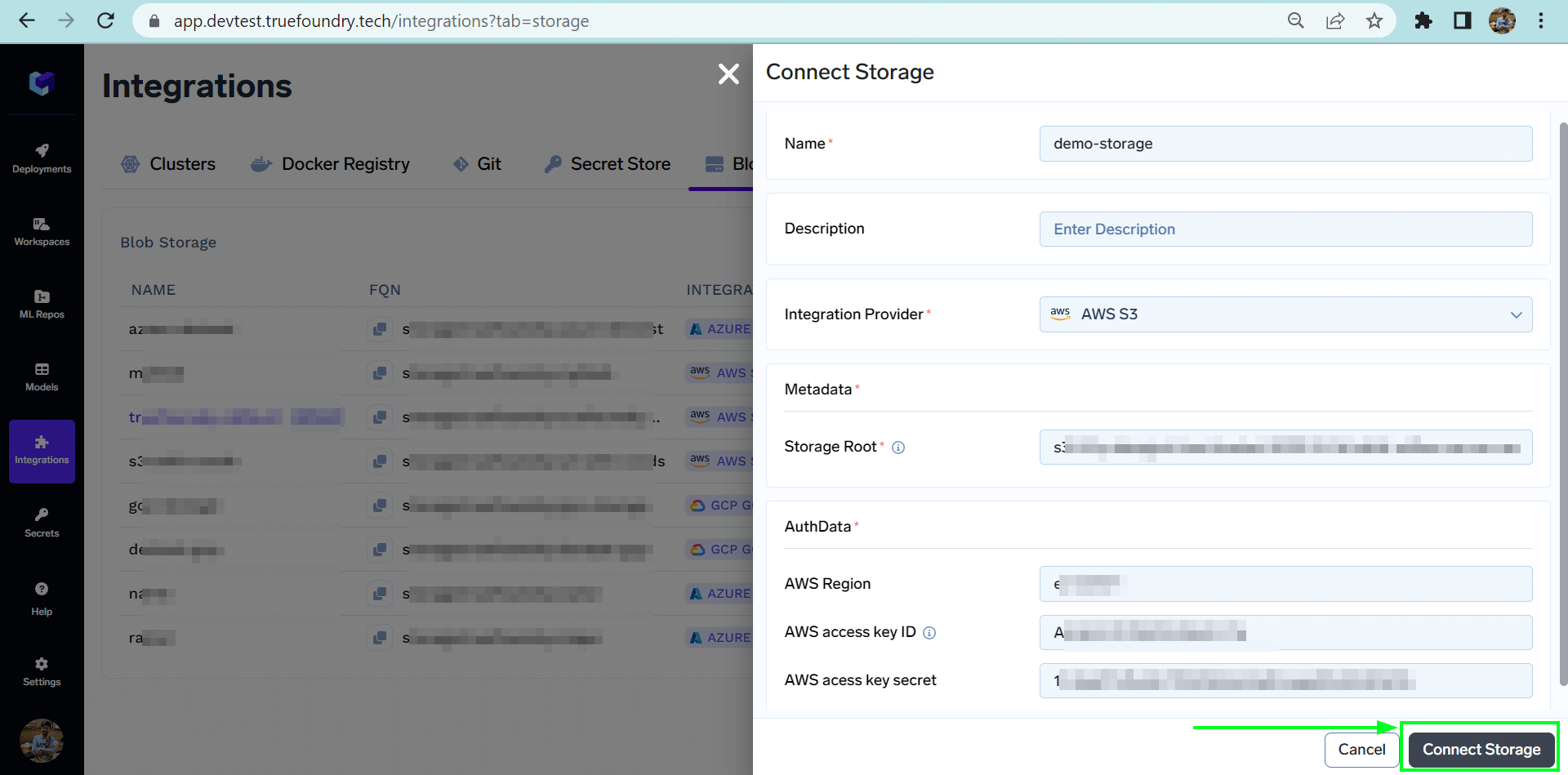

Connect Storagebutton at the top right corner. - Now add the name of the storage you want to connect. Select the Integration Provider.

- Fill in the credentials and storage root according to the selected integration provider.

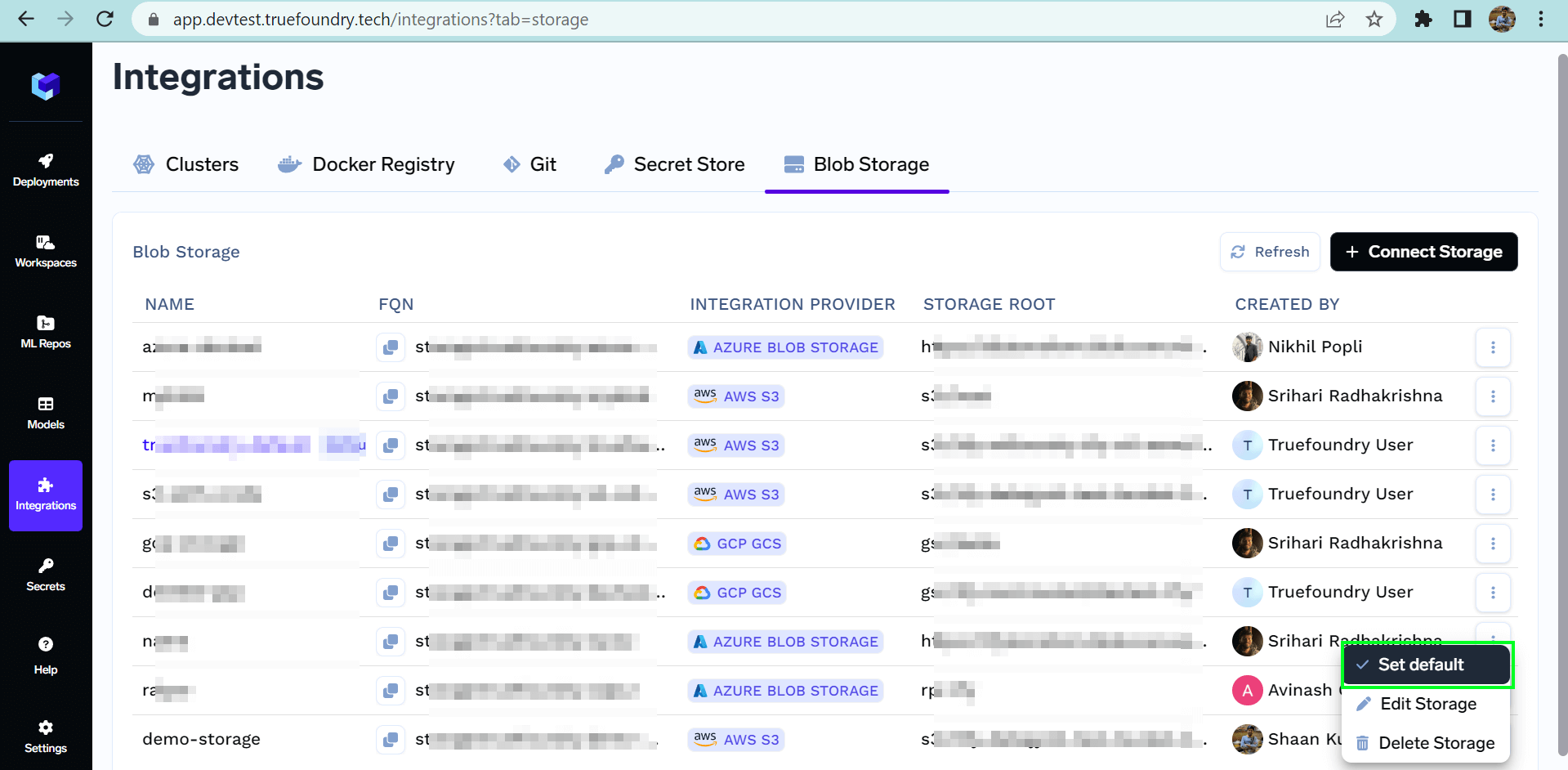

List of all Storage

Connect AWS S3 storage

Follow the steps below to connect S3 storage to TrueFoundry:

- Create an S3 bucket.
  - Make sure the bucket has a lifecycle configuration that aborts incomplete multipart uploads after 7 days.
  - Make sure CORS is applied on the bucket with the below configuration.
- You might already have an IAM role for TrueFoundry named tfy-<short-region-name>-<name>-platform-role-<xxxyyyzzz>; if not, create a new one. You can add the following permission to that role. Alternatively, create a user with the permissions below, generate an access key and secret key, and integrate the blob storage using those keys.
- Navigate to Integrations > Blob Storage and add your S3 bucket by clicking Connect Storage. For the region, provide the region of the blob storage, e.g. eu-west-1.
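The 7-day multipart-upload rule mentioned above can be expressed as a lifecycle configuration document. The following is a minimal sketch, not TrueFoundry's own configuration: the function name and the rule ID are hypothetical, and the empty prefix filter assumes the rule should cover the whole bucket.

```python
import json


def abort_multipart_lifecycle(days: int = 7) -> dict:
    """Build an S3 lifecycle configuration that aborts incomplete multipart uploads."""
    if days < 1:
        raise ValueError("days must be at least 1")
    return {
        "Rules": [
            {
                "ID": "abort-incomplete-multipart-uploads",  # hypothetical rule name
                "Status": "Enabled",
                "Filter": {"Prefix": ""},  # assumption: apply to every object in the bucket
                "AbortIncompleteMultipartUpload": {"DaysAfterInitiation": days},
            }
        ]
    }


if __name__ == "__main__":
    # Print the document so it can be reviewed before it is applied anywhere.
    print(json.dumps(abort_multipart_lifecycle(), indent=2))
```

If boto3 is available, a dict of this shape is what `put_bucket_lifecycle_configuration` accepts as its `LifecycleConfiguration` argument; review the rule against your own bucket policy before applying it.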

Connect Google GCS

Follow the steps below to connect GCS storage to TrueFoundry:

- Create a GCP bucket.
  - Make sure to add a lifecycle configuration on the bucket that deletes incomplete multipart uploads after 7 days.
    - To do this, go to GCP bucket -> Lifecycle -> Add a rule.
    - Select Delete multi-part upload for 7 days.
  - Add the CORS policy to the GCP bucket. Adding a CORS policy to a GCP bucket is currently not possible through the console, so use gsutil for this.
    - Create a file called cors.json using the below command.
    - Attach the above CORS policy to the bucket by running the following command using gsutil.

- Create an IAM service account named tfy-<short-region-name>-<name>-platform-role, if not created before.
- Create a custom IAM role with the following permissions:
  - Navigate to IAM & Admin -> Roles.
  - Click + CREATE ROLE.
  - Enter a name and a description, and set the stage to General Availability.
  - Click ADD PERMISSIONS and add the permissions listed above.
  - Click CREATE.
- Attach the custom IAM role to the service account.
  - In the IAM section, locate the service account created earlier.
  - Click the Edit icon next to the service account.
  - Click ADD ROLE and select the custom role you created.
  - Next to the role, click ADD IAM CONDITION.
  - Type a title and, under the CONDITION EDITOR tab, enter this condition: resource.name.startsWith('projects/_/buckets/<bucket name>')
  - Click SAVE.
- Once the IAM service account is created, make sure to create a key in JSON format.
- Navigate to Integrations > Blob Storage and add your GCS bucket by clicking Connect Storage.
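Two pieces of the GCS setup above are easy to mistype by hand: the 7-day multipart-upload lifecycle rule and the IAM condition string. The sketch below generates both. The function names and the example bucket name are hypothetical, and the lifecycle document assumes the JSON shape used by Cloud Storage lifecycle configuration files, so compare it with the rule the console creates before relying on it.

```python
import json


def gcs_abort_multipart_lifecycle(age_days: int = 7) -> dict:
    """Lifecycle document that aborts incomplete multipart uploads after age_days."""
    if age_days < 1:
        raise ValueError("age_days must be at least 1")
    return {
        "rule": [
            {
                "action": {"type": "AbortIncompleteMultipartUpload"},
                "condition": {"age": age_days},
            }
        ]
    }


def bucket_iam_condition(bucket_name: str) -> str:
    """Return the IAM condition that limits a role to one bucket's resources."""
    # Reject empty names and characters that would break the quoted expression.
    if not bucket_name or any(ch in bucket_name for ch in "'\"<>{} "):
        raise ValueError(f"unexpected bucket name: {bucket_name!r}")
    return f"resource.name.startsWith('projects/_/buckets/{bucket_name}')"


if __name__ == "__main__":
    print(json.dumps(gcs_abort_multipart_lifecycle(), indent=2))
    print(bucket_iam_condition("my-bucket"))  # "my-bucket" is a placeholder
```

The condition string can be pasted into the CONDITION EDITOR tab as-is once the placeholder bucket name is replaced with your own.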

Connect Azure Blob Storage

Follow the steps below to connect your Azure blob storage to TrueFoundry:

- Create an Azure Storage account in your resource group.
  - Instance details - You must select Geo-redundant storage to make sure your data is available through other regions in case of region unavailability.
  - Security - Make sure to:
    - DISABLE Allow enabling anonymous access on individual containers
    - ENABLE Enable storage account key access
  - Network access - ENABLE Allow public access from all networks
  - Recovery - You can keep the default of 7 days.
- Create an Azure container inside the above storage account.

- Search for CORS in the left panel and, for Blob service, select the options below (optional for File service, Queue service and Table service; only apply the change if you are using them):
  - Allowed Origins - * or your control plane URL
  - Allowed Methods - GET, POST, PUT
  - Allowed Headers - *
  - Exposed Headers - Etag
  - MaxAgeSeconds - 3600

- Collect the following information:
  - Standard endpoint - Endpoint of the blob storage. Once the container is created, get the standard endpoint of the blob storage along with the container, substituting your storage account name and container name.
  - Connection string - In the Azure portal, open your storage account and go to Access keys under the Security + Networking section, which contains the Connection String.

- Head over to the platform.
  - In the left section, in the Integrations tab, click Blob Storage and then + Connect Storage.
  - Select Azure Blob Storage as the Integration Provider.
  - Add the standard endpoint as the storage root.
  - Add the connection string in the Azure Blob Connection String field.
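The two values collected above, the standard endpoint and the connection string, are related, so one can be sanity-checked against the other. The sketch below parses a connection string and derives a candidate storage root. It assumes the usual `Key=Value;...` connection string layout and the common `https://<account>.blob.<suffix>/<container>` endpoint pattern; both function names and all sample values are hypothetical, and the endpoint shown in the Azure portal remains the authoritative value.

```python
def parse_connection_string(conn_str: str) -> dict:
    """Split an Azure Storage connection string into its key/value parts."""
    parts = {}
    for segment in conn_str.split(";"):
        if not segment.strip():
            continue  # tolerate a trailing semicolon
        # Split on the first "=" only: account keys are base64 and may end in "=".
        key, sep, value = segment.partition("=")
        if not sep:
            raise ValueError(f"malformed segment: {segment!r}")
        parts[key.strip()] = value.strip()
    return parts


def storage_root(conn_str: str, container: str) -> str:
    """Derive a candidate storage root (endpoint plus container) for comparison."""
    parts = parse_connection_string(conn_str)
    account = parts["AccountName"]
    protocol = parts.get("DefaultEndpointsProtocol", "https")
    suffix = parts.get("EndpointSuffix", "core.windows.net")  # assumed default suffix
    return f"{protocol}://{account}.blob.{suffix}/{container}"


if __name__ == "__main__":
    # Placeholder values only; never commit a real account key.
    sample = (
        "DefaultEndpointsProtocol=https;AccountName=mystorageaccount;"
        "AccountKey=ZmFrZS1rZXk=;EndpointSuffix=core.windows.net"
    )
    print(storage_root(sample, "mycontainer"))
```

If the derived value differs from the endpoint shown in the portal, use the portal's value as the storage root.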