Provisioning the queue

You can create an AWS Simple Queue Service (SQS) queue using either the AWS Management Console or the AWS Command Line Interface (CLI). The steps below use the AWS Management Console:

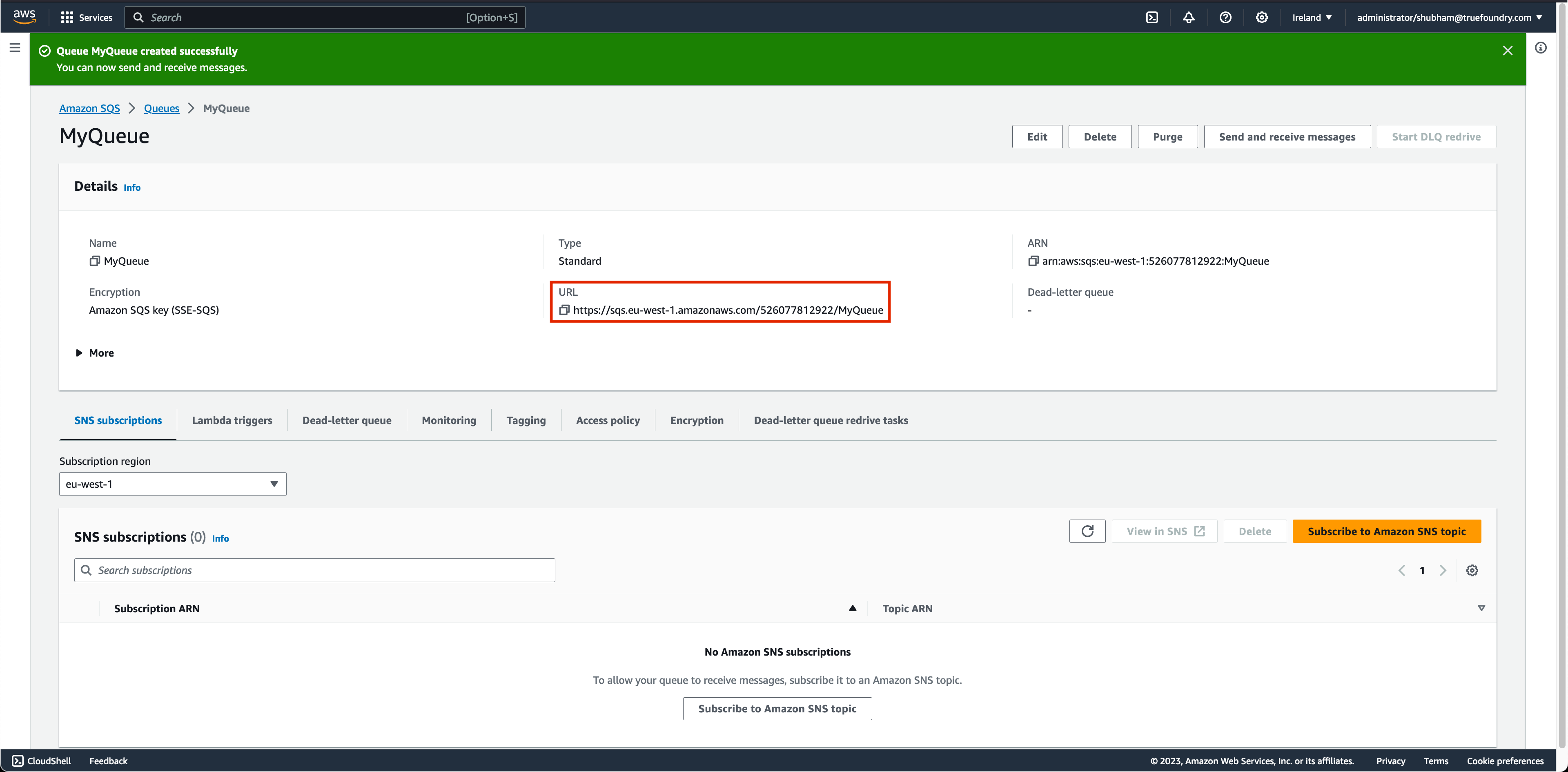

After you click Create queue, a confirmation message indicates that the queue was created successfully.
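If you would rather script this step than use the console, the same queue can be created programmatically. The sketch below is not part of the original guide: it assumes the boto3 library and configured AWS credentials, and the helper name `create_queue`, the queue name, the region, and the visibility timeout value are all illustrative placeholders.

```python
def create_queue(sqs_client, name, visibility_timeout_s=30):
    """Create a standard SQS queue and return its URL.

    `sqs_client` is expected to be a boto3 SQS client (or any object
    exposing a compatible `create_queue` method).
    """
    response = sqs_client.create_queue(
        QueueName=name,
        # SQS attribute values are passed as strings.
        Attributes={"VisibilityTimeout": str(visibility_timeout_s)},
    )
    return response["QueueUrl"]


def main():
    # Imported here so the helper above can be reused without boto3 installed.
    import boto3

    # Hypothetical region and queue name; replace with your own.
    client = boto3.client("sqs", region_name="us-east-1")
    print(create_queue(client, "my-async-input-queue"))
```

Calling `main()` with valid credentials should print the URL of the new queue, which you will need when configuring the service.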

Configuring TrueFoundry Async Service with AWS SQS

You will have to specify these configurations for AWS SQS Input Worker:

Configuring Autoscaling for AWS SQS Queue

Note:

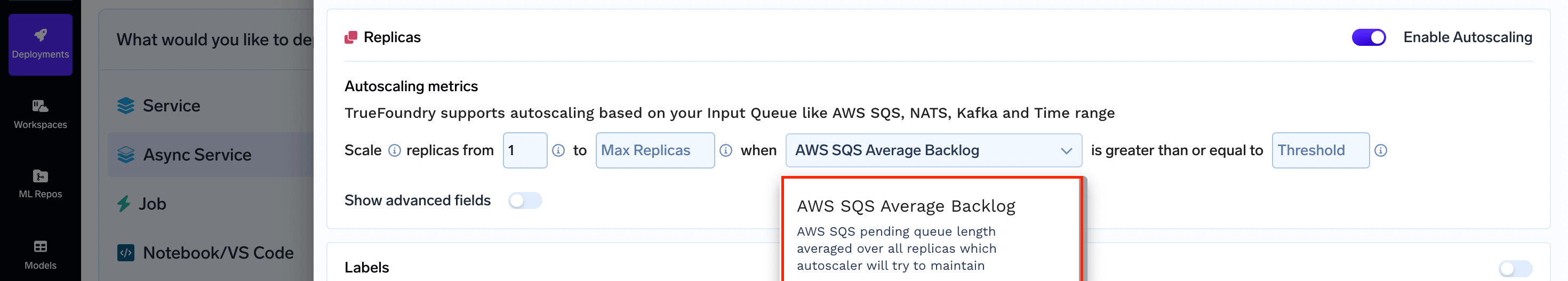

This metric is only available in case you are using AWS SQS for your input queueParameters for SQS Average Backlog

- Queue lag threshold: This is the maximum number of messages each replica should handle. If the number of queued messages per replica exceeds this threshold, the auto-scaler adds replicas to share the workload.
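To make the effect of the threshold concrete, here is a minimal sketch of how a backlog-based autoscaler might choose a replica count. It assumes the scaler targets roughly `backlog / threshold` replicas, rounded up and clamped to configured bounds. The function name and the `min_replicas` and `max_replicas` parameters are hypothetical, and the actual scaling logic may differ.

```python
import math


def desired_replicas(backlog, queue_lag_threshold, min_replicas, max_replicas):
    """Estimate the replica count needed to keep per-replica backlog under the threshold."""
    if queue_lag_threshold <= 0:
        raise ValueError("queue_lag_threshold must be positive")
    # Enough replicas so that each handles at most `queue_lag_threshold` messages.
    needed = math.ceil(backlog / queue_lag_threshold)
    # Clamp to the configured autoscaling bounds.
    return max(min_replicas, min(max_replicas, needed))
```

For example, with a threshold of 30 and 100 messages waiting, this sketch asks for 4 replicas, since 3 replicas would leave each with more than 30 messages.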

Configuring AWS SQS Average Backlog

Through the User Interface (UI)

Via the Python SDK

In your Service deployment code (deploy.py), include the following: